Naver, the largest search engine and tech giant in Korea, sits at the center of the country’s digital ecosystem. From ecommerce and digital payments to blogs and news, it connects massive user traffic and data across multiple verticals. In Korea, the true traffic gateway is not Amazon, but Naver.

If you want to reliably and scale the collection of Naver Shopping data, you need a systematic approach. This guide breaks down practical strategies to help you scrape Naver platform data quickly and cost-effectively, under compliant conditions, so you can make better business decisions.

If you operate in the Korean market but rely only on Google, Amazon, or global tools for data, you are seeing peripheral signals rather than real local demand. Scraping Naver means accessing native Korean data context. In Korea, Naver functions as:

- Ecommerce entry point

- Content distribution hub

- Blog and community aggregation platform

- News platform

- Core channel for local brand awareness

By scraping Naver search results, product listings, blogs, forums, and news content, sellers can:

- Identify Korean keyword rankings and optimize SEO for ecommerce websites

- Analyze competitor pricing, sales volume, and promotion strategies

- Extract user reviews and forum discussions to uncover consumer preferences, pain points, and emerging trends

Naver data scraping supports product selection, pricing, and marketing strategies, helping ecommerce sellers stay competitive in the Korean market.

Naver organizes content into multiple vertical sections, each with its own URL patterns and DOM structures. Planning is required before scraping. Major sections include:

Search results:

Naver’s core search function returns web pages, images, videos, and ecosystem-native content blocks. Unlike Google, Naver heavily integrates its own services into search results. Scraping search pages allows sellers to collect competitor data, keyword rankings, and traffic signals directly.

News section:

Aggregates articles from hundreds of Korean media outlets and updates in real time. For ecommerce sellers, it is important for monitoring brand exposure, market trends, and industry developments.

Blog platform:

A highly active blogging ecosystem where users share experiences, product reviews, and professional insights. Blog data helps analyze consumer preferences and identify emerging trends.

Before collecting data, clearly define your scraping targets and parsing logic to improve efficiency and data accuracy.

Step 2:Prepare the Technical Environment

Naver pages are structurally complex and contain large amounts of Korean text. Stable requests and accurate parsing are essential. Set up a basic Python scraping environment first.

1.Install common scraping libraries

pip install requests beautifulsoup4 lxml urllib3These libraries handle different tasks:

- BeautifulSoup for parsing HTML structures

- lxml for improved parsing speed and stability

- urllib.parse for handling Korean keyword URL encoding

2. Import base modules

import requests

from bs4 import BeautifulSoup

import urllib.parse

import time

import random

from typing import Dict, List, Optional3. Korean text and encoding preprocessing

Although Python 3.x supports Unicode by default, scraping Naver still requires attention to:

- Zero-width characters

- HTML entity encoding

- BOM characters

- URL encoding and decoding issues

Without preprocessing, data storage, keyword matching, and sentiment analysis may be affected.

4.Risk control and request pacing

When scraping in batches, do not ignore request frequency and proxy risk control. Naver may restrict abnormal access behavior. Use rotating proxy solutions or randomized request intervals to maintain stability.

5.Create a Dedicated Naver Session

- Create session

Naver evaluates request headers, language preferences, and connection behavior to determine traffic legitimacy. Default request settings are easily flagged as abnormal. You need to simulate a real Korean browser environment.

import requests

def create_naver_session() -> requests.Session:

"""Create a requests session optimized for Naver scraping."""

session = requests.Session()

# Headers that mimic a Korean browser user

session.headers.update({

"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "

"(KHTML, like Gecko) Chrome/119.0.0.0 Safari/537.36",

"Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,*/*;q=0.8",

"Accept-Language": "ko-KR,ko;q=0.9,en;q=0.8",

"Accept-Encoding": "gzip, deflate, br",

"Connection": "keep-alive",

"Upgrade-Insecure-Requests": "1",

"Cache-Control": "max-age=0",

})

return sessionKey points:

- Set Accept-Language to prioritize Korean to ensure complete localized content

- Use a common browser User-Agent to avoid being identified as a script

6. Build a stable request mechanism

Implement retry logic in your request workflow to improve reliability.

import time

import random

from typing import Optional

def get_page_safely(session: requests.Session, url: str, max_retries: int = 3) -> Optional[str]:

"""Fetch a page with retry logic and proper error handling."""

for attempt in range(max_retries):

try:

# Random delay to avoid appearing bot-like

time.sleep(random.uniform(1, 3))

response = session.get(url, timeout=30)

if response.status_code == 200:

# Ensure proper encoding for Korean text

response.encoding = response.apparent_encoding or 'utf-8'

return response.text

elif response.status_code in (403, 429):

print(f"Blocked by Naver (status {response.status_code})")

return None

elif response.status_code == 404:

print(f"Page not found: {url}")

return None

except requests.RequestException as e:

print(f"Request failed (attempt {attempt + 1}): {e}")

if attempt < max_retries - 1:

time.sleep(random.uniform(2, 5))

return None1.Build search URLs

Encode Korean keywords properly. Pagination follows the pattern start=1,11,21 and so on.

2.Request pages

Use the configured session to access search URLs.

3.Parse results

Extract from each result block:

- Title

- Link

- Description

- Source site

Step Five:Text Processing

Clean Korean text

- Remove extra spaces

- Remove special characters

- Prevent encoding errors

Standardize data structure

Convert all results into a unified structure (dictionary/JSON) for database storage or further analysis.

Batch keyword scraping

- Loop through multiple keywords

- For each keyword, scrape both search and news sections

- Insert delays between requests to avoid excessive frequency

Aggregate results

Store results categorized by keyword and calculate total counts.

1.Build a Stable Session Mechanism

High success rates depend on behaving like a real user. Naver evaluates navigation paths, dwell time, and page transitions to detect abnormal traffic. Isolated, repetitive requests are easily flagged.

Optimization strategies:

- Use persistent sessions

- Simulate realistic browsing flows (search → click → pagination)

- Maintain reasonable dwell time

2.Control Request Frequency

Large volumes of requests in a short period can trigger 429 rate limits or 403 blocks. Instead of aggressive scraping:

- Set randomized delays

- Control proxy request frequency

- Execute tasks in batches

- Use High-Quality Rotating Proxies

Proxy quality directly affects scraping success. Naver analyzes IP geolocation, historical behavior, and access patterns. Frequent reuse of a single IP or abnormal data center IPs increases detection risk.

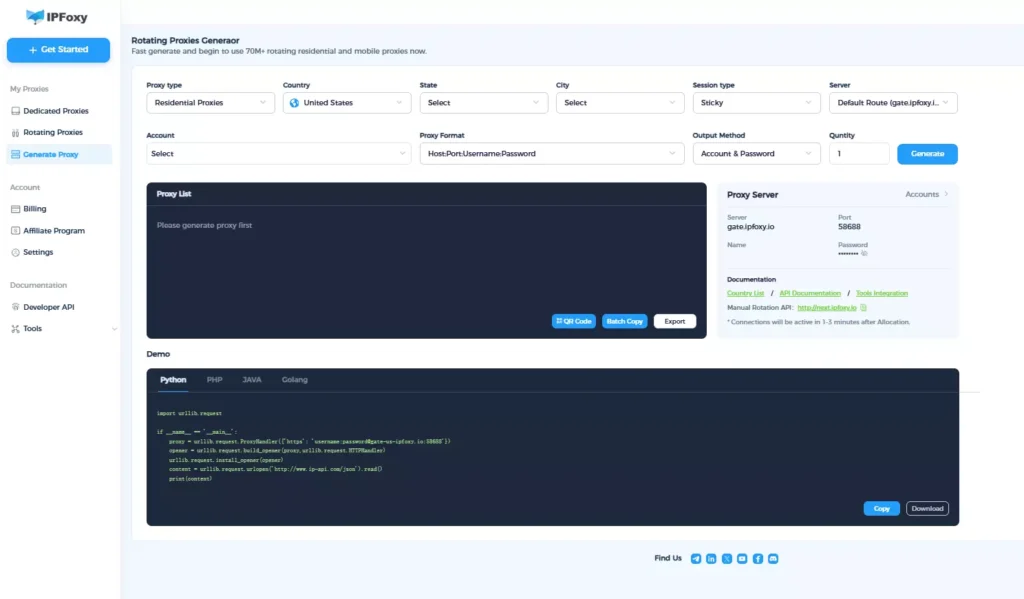

In practice, rotating residential proxy solutions are integrated into scraping workflows to reduce single-IP exposure. IPFoxy provides rotating proxy services that support both API integration and demo code integration for scraping workflows.

Here are the example:

import urllib.request

if __name__ == '__main__':

proxy = urllib.request.ProxyHandler({'https': 'username:password@gate-us-ipfoxy.io:58688'})

opener = urllib.request.build_opener(proxy,urllib.request.HTTPHandler)

urllib.request.install_opener(opener)

content = urllib.request.urlopen('http://www.ip-api.com/json').read()

print(content)

Through the rotating proxy dashboard, Korean rotating residential or mobile IPs can be generated, supporting automatic IP switching by request or by time interval. In batch scraping scenarios, this approach helps maintain access stability while reducing risk control triggers.

Common causes include JavaScript-rendered content, improperly configured request headers, delayed loading modules, or incorrect pagination handling. Check for asynchronous content, ensure Korean language priority, and verify pagination parameters.

Naver relies on behavioral modeling rather than request count alone. Continuous high-frequency access from a fixed IP, lack of session continuity, or unrealistic browsing behavior can lead to blocking. Control proxy frequency, add random intervals, simulate user behavior, and use rotating residential proxy rotation to reduce risk.

When keyword volume exceeds 100, the challenge shifts from feasibility to stability and scalability. Recommended strategies include batch execution, task queues, assigning different proxies to keyword groups, and combining rotating proxy mechanisms to improve overall efficiency.

Conclusion

In the Korean market, Naver is both a traffic gateway and a signal of consumer trends. As ecommerce continues to expand, businesses that gain earlier access to real local data gain competitive advantage. Stable Naver Shopping and content scraping is not just a technical task but a strategic capability. Building strong data infrastructure early ensures long-term competitiveness in the Korean ecommerce landscape.