In the SEO and data-driven landscape of 2026, Google remains the world’s most valuable source of search intelligence. Whether you’re tracking keyword rankings, analyzing SERP features, or monitoring market trends at scale, access to accurate Google search data is now a core business requirement.

At the same time, Google’s anti-bot and anti-automation systems are more advanced than ever. How can you collect reliable Google search data and competitor insights without triggering CAPTCHAs or getting blocked?

This guide breaks down how Google detects automated traffic and provides a proven proxy configuration strategy to help you perform search data collection tasks more efficiently, securely, and consistently in 2026.

I. How Does Google Detect and Block Non-Human Traffic?

Before building any scraping infrastructure, it’s critical to understand Google’s defense system. Google no longer relies on simple IP filtering — it operates a real-time, multi-dimensional risk scoring engine.

1. IP Reputation & ASN Signals

Google evaluates both the IP reputation and the ASN (Autonomous System Number) behind the traffic. IP ranges from cloud providers such as AWS or Azure are more likely to be flagged, especially if those ranges have a history of abuse. Even if the IP itself is not blacklisted, a data center ASN can significantly reduce trust scores.

2. Request Frequency & Traffic Patterns

Google closely monitors request behavior from individual IPs and subnets. High-frequency requests, perfectly timed intervals, or sudden traffic spikes can quickly trigger CAPTCHAs or temporary bans. For example, sending requests exactly every 3 seconds is a classic automation pattern that is easy to detect.
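To see why fixed intervals stand out, here is a minimal sketch that measures how regular a series of request timestamps is. It illustrates the general idea only and is not Google's actual detection logic; the function name `interval_regularity` and the sample timestamps are made up for this example.

```python
import statistics


def interval_regularity(timestamps):
    """Return the coefficient of variation of the gaps between requests.

    Values near 0 mean the gaps are almost identical, which looks machine-like.
    """
    gaps = [later - earlier for earlier, later in zip(timestamps, timestamps[1:])]
    if len(gaps) < 2:
        raise ValueError("need at least three timestamps")
    return statistics.pstdev(gaps) / statistics.mean(gaps)


fixed = [0, 3, 6, 9, 12, 15]        # one request exactly every 3 seconds
jittered = [0, 11, 29, 41, 60, 72]  # irregular gaps, in seconds

print(interval_regularity(fixed))     # 0.0, perfectly regular
print(interval_regularity(jittered))  # clearly above zero
```

Any statistic of this kind separates the two series immediately, which is why randomized pacing matters more than simply slowing down.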

3. Geolocation Consistency

Google search results are heavily location-dependent. If the IP location does not match the search intent — for example, using a German IP to search for “New York pizza delivery” — the request may immediately raise suspicion.

4. Browser & Device Fingerprinting

Google analyzes hundreds of fingerprinting signals including TLS fingerprints (JA3/JA4), HTTP/2 settings, font lists, WebGL parameters, and browser headers. Repeated or incomplete headers and mismatched user agents can easily expose automation traffic.

5. CAPTCHA & Soft Blocking Mechanisms

Instead of immediately blocking traffic, Google often applies gradual restrictions such as CAPTCHA challenges, reduced search depth, or temporary rate limiting. This “soft wall” approach makes blocks harder to notice, because many scrapers only discover the problem when data quality drops or response delays suddenly increase.

II. Core Use Cases for Google Proxies in 2026

Google’s ecosystem includes Search, Ads, Maps, Shopping, and many other services, each with different access patterns and security mechanisms. Occasional manual searches may not require proxies, but once operations scale up, proxy infrastructure becomes essential.

Below are the five most common Google proxy use cases in 2026:

1. SERP Monitoring & Rank Tracking

SEO platforms and marketing teams continuously monitor keyword rankings across different regions and devices. Without proxies, large-scale queries quickly trigger rate limits or CAPTCHAs. Proxy IPs distribute requests across multiple locations, allowing teams to collect stable and localized SERP data.

2. Large-Scale Keyword Research

Keyword research often involves thousands of related search queries for analyzing search volume, long-tail opportunities, and competition difficulty. This behavior differs significantly from normal user activity. Rotating proxy pools help distribute traffic loads and reduce the risk of detection during long-term keyword collection.

3. Local SEO & Geo-Targeted Search Simulation

Google personalizes search results heavily based on location. Businesses that need to evaluate rankings from country level down to neighborhood level must use IP addresses that accurately match target regions. Precise geo-targeted proxies are essential for reproducing authentic local search results.

4. Google Ads Monitoring & Ad Verification

Advertisers and agencies often monitor ad placements, creatives, and competitor campaigns across multiple regions. Google Ads systems are extremely sensitive to repetitive requests from the same IP. Proxies help verify ad visibility while avoiding fraud detection triggers or impression distortions caused by repeated manual checks.

5. Brand Monitoring & Market Research

Brands continuously track product visibility, search exposure, and competitor listings across Google Search and Google Shopping. These workflows involve frequent automated checks that can quickly exceed normal access thresholds. Rotating residential proxies support uninterrupted monitoring and long-term market trend analysis.

III. How to Choose the Right Proxy Type for Google?

Because Google relies on multi-layered detection systems, proxy selection matters more than ever in 2026. Common proxy types include residential proxies, data center proxies, and mobile proxies.

Reliable global proxy providers such as IPFoxy offer multiple proxy types designed for different Google-related use cases.

Below is a practical comparison for Google scenarios:

| Proxy Type | IP Reputation (ASN) | Geo Accuracy | Fingerprint Compatibility | Google Blocking Risk | Recommended Use Cases |
| --- | --- | --- | --- | --- | --- |
| Data Center Proxies | Very Low (Cloud ASN) | City-level (inaccurate) | Poor | Extremely High | Not recommended for Google |
| Static Residential Proxies | High (Real ISP) | City-level | Good | Low | Local rank tracking, SEM |
| Rotating Residential Proxies | Very High (ISP Pool) | City-level | Excellent | Very Low | Large-scale SERP scraping |
| Mobile Proxies | Very High (Carrier ASN) | Regional-level | Excellent | Low | Mobile SEO testing |

IV. Google Proxy Setup Guide: How to Reduce Blocking Risks During Google Search Scraping

1. Proxy Configuration

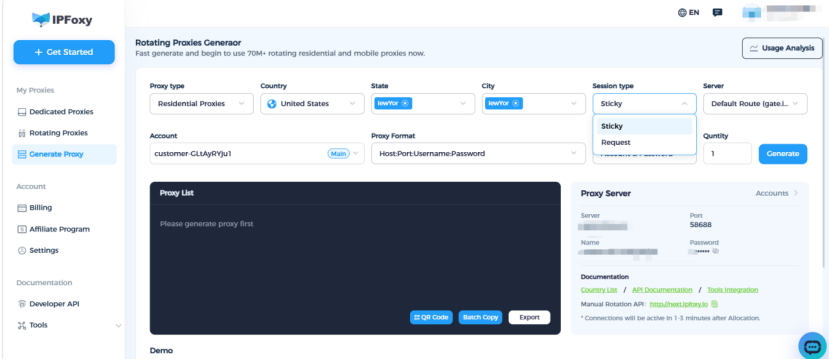

This tutorial uses IPFoxy static residential proxies as an example. First, you’ll need the standard proxy credentials, including IP address, port, username, and password.
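As a rough sketch, the four credentials can be combined into a single proxy URL in Python. The host, port, username, and password below are placeholders, not real IPFoxy values, and the helper names are invented for this example. The resulting dictionary has the shape accepted by both `urllib.request.ProxyHandler` and the `proxies=` argument of the `requests` library.

```python
from urllib.parse import quote


def build_proxy_url(host, port, username, password, scheme="http"):
    """Combine proxy credentials into one URL, escaping special characters."""
    user = quote(username, safe="")
    pwd = quote(password, safe="")
    return f"{scheme}://{user}:{pwd}@{host}:{port}"


def build_proxies(host, port, username, password):
    """Route both HTTP and HTTPS traffic through the same proxy endpoint."""
    url = build_proxy_url(host, port, username, password)
    return {"http": url, "https": url}


# Placeholder credentials for illustration only.
proxies = build_proxies("203.0.113.10", 8000, "demo_user", "p@ss:word")
print(proxies["https"])
```

Percent-encoding the username and password matters because characters such as `@` or `:` in a password would otherwise break URL parsing.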

Step 1: Choose the Right Proxy Type

Select the proxy type that fits your business scenario based on the recommendations above. For Google scraping tasks, rotating residential proxies are generally preferred because they allow you to customize IP rotation intervals, target regions, and protocol settings.

Step 2: Configure Your Environment

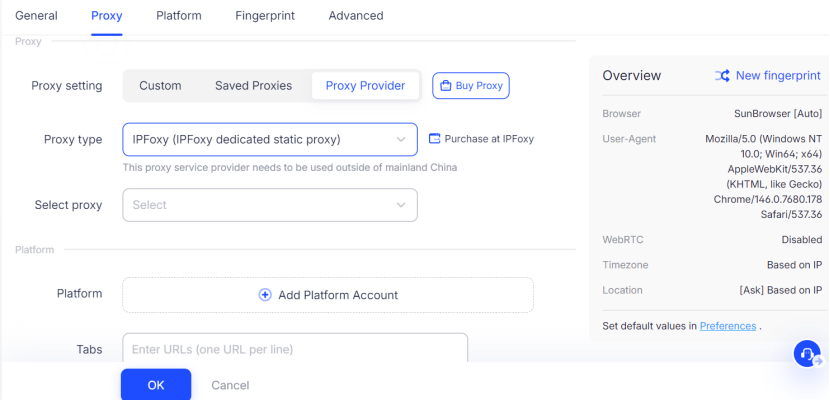

- Anti-detect Browsers:

Anti-detect (fingerprint) browsers help eliminate TLS fingerprint inconsistencies and browser identity issues. In Google scraping environments, they can significantly reduce detection risks. For example, browsers like AdsPower can integrate with IPFoxy proxies directly, without requiring manual configuration.

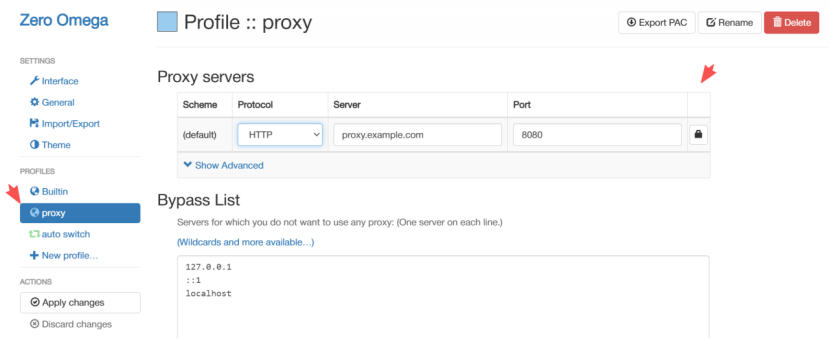

- Chrome Extensions:

For lightweight browsing tasks, extensions such as SwitchyOmega or FoxyProxy can be used. However, browser extensions only change how traffic is routed and cannot mask browser fingerprints, making them unsuitable for advanced automation workflows.

2. Control Request Rates & Concurrency

This is one of the most important strategies for avoiding bans. Use randomized intervals instead of fixed request frequencies, and keep per-IP request volume low.

- Avoid Fixed Timing Patterns:

Never send requests at exact intervals such as every 3 or 5 seconds. Instead, draw delays from a Gaussian-style distribution with a mean of about 15 seconds and a standard deviation of 3–5 seconds to mimic human behavior.

- Prevent Traffic Spikes:

Use task queues instead of aggressive multi-threaded requests. Ideally, maintain only one concurrent connection per static residential IP.

- Add Cooldown Periods:

After every 50–100 requests, pause for 60–120 seconds to simulate natural browsing breaks.
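The three rules above can be sketched as a single sequential loop. This is one possible implementation under stated assumptions: `run_paced`, `next_delay`, the 5-second minimum delay, and the `fetch` callback are all hypothetical, and the timing values simply mirror the figures given in this section.

```python
import random
import time


def next_delay(mean=15.0, stddev=4.0, minimum=5.0, rng=random):
    """Draw a Gaussian delay in seconds, clamped so it never gets too short."""
    return max(minimum, rng.gauss(mean, stddev))


def run_paced(queries, fetch, sleep=time.sleep, rng=random):
    """Process queries one at a time with random delays and periodic cooldowns.

    `fetch` is a caller-supplied function that performs one search request.
    """
    results = []
    since_cooldown = 0
    batch_size = rng.randint(50, 100)      # requests before the next long pause
    for query in queries:
        results.append(fetch(query))
        since_cooldown += 1
        if since_cooldown >= batch_size:
            sleep(rng.uniform(60, 120))    # cooldown: simulate a browsing break
            since_cooldown = 0
            batch_size = rng.randint(50, 100)
        else:
            sleep(next_delay(rng=rng))     # normal randomized gap
    return results


# Dry run: record the planned delays instead of actually sleeping.
planned = []
run_paced(["q1", "q2", "q3"], fetch=lambda q: q, sleep=planned.append)
print([round(d, 1) for d in planned])
```

Because the loop is strictly sequential, running one such loop per proxy keeps concurrency at one connection per IP without any extra locking.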

Many scraping projects fail not because the proxies are poor, but because the traffic behavior is too aggressive.

3. Match Geolocation With Search Intent

Google cross-checks IP geolocation against query semantics. If you are searching for “London Best Hotels,” your proxy should ideally originate from London, UK. Strong geographic consistency can significantly reduce suspicion scores.
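One simple way to enforce this in a scraper is to tag each proxy with its location and pick one that matches the query's target region. The sketch below assumes you maintain such a tagged pool yourself; the `Proxy` class, `pick_proxy` function, and endpoint URLs are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Proxy:
    url: str
    country: str  # ISO country code, e.g. "GB"
    city: str


def pick_proxy(pool, country, city=None):
    """Prefer an exact city match, fall back to the same country, else None."""
    same_country = [p for p in pool if p.country == country]
    if city is not None:
        same_city = [p for p in same_country if p.city == city]
        if same_city:
            return same_city[0]
    return same_country[0] if same_country else None


# Placeholder endpoints for illustration only.
pool = [
    Proxy("http://user:pass@de-berlin.proxy.example:8000", "DE", "Berlin"),
    Proxy("http://user:pass@uk-manchester.proxy.example:8000", "GB", "Manchester"),
    Proxy("http://user:pass@uk-london.proxy.example:8000", "GB", "London"),
]

# A query such as "London Best Hotels" should leave through a London IP.
print(pick_proxy(pool, country="GB", city="London").city)  # London
```

Returning `None` when no proxy matches lets the caller skip or defer the query rather than send it from a mismatched region.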

4. Simulate Real User Behavior

Google increasingly relies on behavioral analysis. If every session opens and closes instantly, the traffic pattern becomes highly suspicious.

- Referer Simulation:

Avoid sending every request directly to result pages. Simulate navigation from the Google homepage into search results.

- Enable Cookie Persistence:

Use realistic cookie sessions so Google recognizes the traffic as coming from a returning user with browsing history.
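Both points can be sketched with Python's standard library alone. This is a minimal illustration, not a complete browser simulation: `make_session` and `search_request` are hypothetical helpers, and the user agent string must be supplied by the caller so that it matches the rest of the client's fingerprint.

```python
import urllib.request
from http.cookiejar import CookieJar
from urllib.parse import urlencode


def make_session(proxy_url=None):
    """Build an opener that keeps cookies across requests, optionally via a proxy."""
    jar = CookieJar()
    handlers = [urllib.request.HTTPCookieProcessor(jar)]
    if proxy_url:
        handlers.append(
            urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
        )
    return urllib.request.build_opener(*handlers), jar


def search_request(query, user_agent):
    """Build a search request that looks like navigation from the homepage."""
    url = "https://www.google.com/search?" + urlencode({"q": query})
    headers = {"User-Agent": user_agent, "Referer": "https://www.google.com/"}
    return urllib.request.Request(url, headers=headers)


opener, jar = make_session()
request = search_request("london best hotels", user_agent="<your browser's user agent>")
print(request.full_url)

# Intended flow (not executed here because it needs network access):
#   opener.open("https://www.google.com/")  # visit the homepage, collect cookies
#   opener.open(request)                    # then search with cookies and Referer
```

Reusing the same `opener` for a whole session is what gives the cookie jar a history; creating a fresh one per request would defeat the purpose.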

5. Continuously Monitor & Adjust

Google’s detection systems evolve constantly. By monitoring response codes, block rates, and CAPTCHA frequency, teams can identify problems early and adjust strategies before disruptions escalate.
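A rolling block-rate counter is one lightweight way to do this. In the sketch below, the block markers (HTTP 429 or 503, a `/sorry/` redirect, and an "unusual traffic" message) are assumptions based on commonly observed behavior and should be adjusted to what you actually see in responses. The class name, window size, and 5% threshold are likewise illustrative.

```python
from collections import deque


def looks_blocked(status_code, final_url, body=""):
    """Heuristic check for a block or CAPTCHA page (markers are assumptions)."""
    if status_code in (429, 503):
        return True
    if "/sorry/" in final_url:
        return True
    return "unusual traffic" in body.lower()


class BlockRateMonitor:
    """Track the share of blocked responses over the most recent requests."""

    def __init__(self, window=200, threshold=0.05):
        self.outcomes = deque(maxlen=window)  # oldest results drop off automatically
        self.threshold = threshold

    def record(self, blocked):
        self.outcomes.append(bool(blocked))

    @property
    def block_rate(self):
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def should_back_off(self):
        return self.block_rate > self.threshold


monitor = BlockRateMonitor(window=10, threshold=0.2)
for status in [200, 200, 200, 429, 200, 200, 429, 429]:
    monitor.record(looks_blocked(status, "https://www.google.com/search?q=x"))
print(monitor.block_rate, monitor.should_back_off())  # 0.375 True
```

When `should_back_off()` turns true, typical responses are to lengthen delays, rotate to a different IP, or pause the affected proxy for a while.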

Stable Google data collection is an ongoing optimization process — not a one-time setup.

V. FAQ

Q: Why do I still get CAPTCHAs or blocks even when using a proxy?

A: Your browser fingerprint (such as Canvas or WebGL signals) may still be detectable, or the IP range itself may already be flagged. Consider switching to higher-trust rotating residential proxies from IPFoxy and resetting the browser environment.

Q: Can I use free proxies for Google scraping?

A: Strongly not recommended. Free proxies are typically low quality, heavily abused, and often blocked within seconds. They also introduce serious security and privacy risks.

Q: Is collecting Google search data acceptable?

A: Collecting publicly available data for research or business analysis is generally considered acceptable as long as it does not involve intrusive bypassing techniques or sensitive personal information.

Conclusion

In 2026, successful SEO scraping is no longer about generating massive request volume — it’s about staying undetectable.

By combining high-quality proxies with proper traffic management and browser fingerprint strategies, you can significantly improve the success rate of Google scraping operations.

Following the techniques in this guide will help you reduce blocking risks while maintaining stable, long-term workflows for rank tracking, SERP monitoring, and SEO data collection.