In 2026, competition among AI models has long shifted from “algorithm performance” to “data ownership.” Whether you are training domain-specific large language models (LLMs) or building specialized AI assistants, high-quality, large-scale, real-time web data is the essential fuel.

However, as anti-bot systems have become fully AI-driven, the barrier to data collection has reached an unprecedented level. Many teams get stuck at the “AI data collection” stage:

- Low success rate

- Incomplete data

- IP bans as soon as scale increases

- Even system crashes

The problem is usually not a lack of scraping know-how. It is a reliance on traditional crawling logic instead of a data collection architecture designed for the AI era.

I. Why AI Data Collection Is Becoming More Difficult

1. Explosive growth in AI data demand

With the rapid expansion of vertical AI applications, the demand for high-quality unstructured data has grown exponentially. Traditional public datasets are already exhausted. Modern AI training requires fresh data from social media, e-commerce dynamics, and niche forums. This surge in demand has turned data sources into a strategic battleground.

2. Upgraded anti-bot mechanisms

Modern websites no longer rely on simple blacklists. Instead, they deploy AI-driven risk control engines such as Cloudflare Turnstile and DataDome.

- Behavior fingerprinting: AI systems analyze TLS fingerprints, mouse movements, and even typing patterns in real time.

- Captcha evolution: Traditional OCR solutions are no longer effective. New-generation captchas can accurately detect bots attempting to mimic human behavior.

3. High-concurrency scaling challenges

AI training requires hundreds of millions or even billions of tokens, which demands extremely high concurrency. Under such conditions, IP lifetimes become very short, distributed node management becomes complex, and any failure in rotation, interval control, or retries can result in large-scale bans.

II. 7 Common Reasons Your AI Data Collection Fails

In 2026, frequent crawler failures usually come down to the following mistakes:

1. IP reuse

Reusing the same IP in high-frequency tasks signals “bot behavior” to detection systems. This often leads to temporary bans, captchas, or 403 responses.

2. Using datacenter IPs to simulate real users

Major platforms often block datacenter IPs very quickly. Their address ranges are registered to hosting providers rather than consumer ISPs, so they tend to fail AI-based environment validation and are already flagged in most risk control systems.

3. Overly regular request patterns

Fixed intervals (e.g., every 2 seconds), predictable user-agent rotation, or scheduled activity patterns are unnatural and easily detected by systems like DataDome.
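To see why fixed intervals stand out, the sketch below compares the coefficient of variation (standard deviation divided by mean) of the gaps between requests for a fixed schedule and a jittered one. The function name `interval_cv` and the sample timestamps are illustrative assumptions, and real detection systems combine many more signals than this single statistic.

```python
import statistics

def interval_cv(timestamps):
    """Coefficient of variation of the gaps between consecutive request times."""
    gaps = [b - a for a, b in zip(timestamps, timestamps[1:])]
    if len(gaps) < 2:
        raise ValueError("need at least three timestamps")
    return statistics.pstdev(gaps) / statistics.mean(gaps)

# Hypothetical request times in seconds.
fixed_schedule = [0.0, 2.0, 4.0, 6.0, 8.0]      # one request every 2 seconds
jittered_schedule = [0.0, 1.5, 4.5, 5.5, 8.0]   # same total time, uneven gaps

print(interval_cv(fixed_schedule))     # 0.0: perfectly regular
print(interval_cv(jittered_schedule))  # clearly above zero
```

A value at or near zero means the client behaves like a timer, which is the low-variance pattern a detector can key on.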

4. Ignoring browser fingerprints

Even if the IP changes, unchanged TLS or Canvas fingerprints can still link requests to the same device.

5. Uncontrolled concurrency

Excessive concurrent requests can trigger protection mechanisms, resulting in IP range bans. A reasonable limit (typically 1–5 QPS per IP) and distributed queue systems are essential.
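One way to enforce a per-IP limit is a small limiter that tracks when each IP may be used next. This is a minimal single-threaded sketch: the class name `PerIPRateLimiter` and its parameters are assumptions made for illustration, and a distributed queue would need shared state that is not shown here.

```python
import time

class PerIPRateLimiter:
    """Enforce a minimum gap between requests sent through the same IP."""

    def __init__(self, max_qps=2.0, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = 1.0 / max_qps
        self._clock = clock
        self._sleep = sleep
        self._next_allowed = {}  # proxy IP -> earliest time of the next request

    def wait(self, ip):
        """Block until `ip` may be used again; return the seconds waited."""
        now = self._clock()
        ready_at = self._next_allowed.get(ip, now)
        delay = max(0.0, ready_at - now)
        if delay > 0:
            self._sleep(delay)
        # Reserve the next slot for this IP.
        self._next_allowed[ip] = max(now, ready_at) + self.min_interval
        return delay

# Placeholder addresses from the documentation range.
limiter = PerIPRateLimiter(max_qps=2.0)
limiter.wait("203.0.113.10")  # first use of an IP never waits
limiter.wait("203.0.113.11")  # a different IP has its own budget
```

Call `wait(ip)` immediately before each request sent through that IP. The clock and sleep functions are injectable so the limiter can be tested without real delays.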

6. Data gaps (success rate issues)

If you do not track the success rate, large numbers of requests that return errors such as 403 or 503 go unnoticed, and the resulting dataset is silently incomplete.
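A simple way to make data gaps visible is to summarize every crawl batch by status code and keep the failed URLs for re-queuing. The sketch below assumes results are available as `(url, status_code)` pairs; the function name `summarize_results` and the sample log are hypothetical.

```python
from collections import Counter

def summarize_results(results):
    """results: iterable of (url, status_code) pairs, one per request."""
    results = list(results)
    status_counts = Counter(status for _, status in results)
    failed_urls = [url for url, status in results if not 200 <= status < 300]
    success_rate = 1 - len(failed_urls) / len(results) if results else 0.0
    return success_rate, status_counts, failed_urls

# Hypothetical crawl log.
log = [
    ("https://example.com/a", 200),
    ("https://example.com/b", 403),
    ("https://example.com/c", 200),
    ("https://example.com/d", 503),
]
rate, counts, to_requeue = summarize_results(log)
print(f"success rate: {rate:.0%}")  # success rate: 50%
print(counts.most_common())         # which status codes dominate
print(to_requeue)                   # URLs that still need to be collected
```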

7. Lack of retry mechanisms

Not retrying failed requests (timeouts, 429, 5xx) results in missing data. Implement exponential backoff (e.g., waits of 1s, 2s, 4s, with 3–5 attempts in total) and switch IPs when encountering blocks or captchas.
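The retry policy described above can be sketched as follows. The `fetch` callable, the proxy identifiers, and the choice of which status codes count as retryable or blocked are assumptions for illustration; captcha detection would need a content check that is not shown.

```python
import random
import time

RETRYABLE = {429, 500, 502, 503, 504}  # transient: back off and retry
BLOCKED = {403}                        # likely a block: switch proxy before retrying

def fetch_with_retry(fetch, url, proxies, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url, proxy) -> status code until it succeeds or attempts run out.

    `fetch` is a caller-supplied function (hypothetical here) that may raise
    TimeoutError. `proxies` is a non-empty list of proxy identifiers.
    """
    proxy_index = 0
    for attempt in range(max_attempts):
        proxy = proxies[proxy_index % len(proxies)]
        try:
            status = fetch(url, proxy)
        except TimeoutError:
            status = None
        if status is not None and 200 <= status < 300:
            return status, proxy
        if status in BLOCKED:
            proxy_index += 1      # rotate to the next proxy
        elif status is not None and status not in RETRYABLE:
            return status, proxy  # e.g. 404: retrying will not help
        if attempt < max_attempts - 1:
            # 1s, 2s, 4s, ... plus a little jitter to avoid a fixed rhythm
            sleep(base_delay * 2 ** attempt + random.uniform(0, 0.25))
    return status, proxy
```

The function returns the last status (or `None` after a timeout) together with the proxy that was used, so the caller can log the failure and re-queue the URL.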

III. Key Strategies for Large-Scale AI Data Collection

To achieve a success rate above 99%, you need a full-stack system covering IP infrastructure and behavioral simulation.

1. Shift to residential proxies

AI data collection requires residential proxies. These IPs originate from real household networks, making requests appear as if they come from real users rather than data centers.

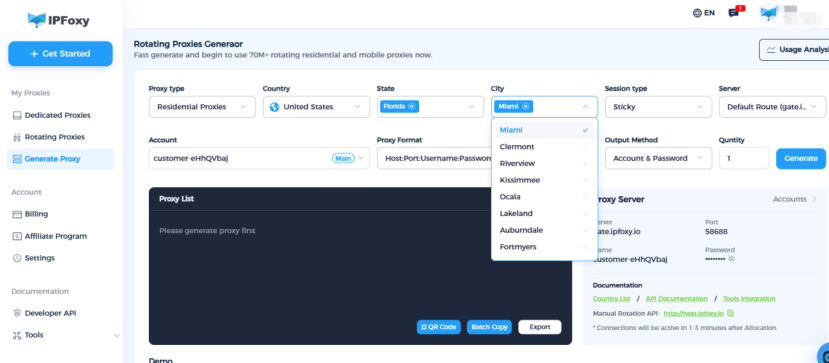

For large-scale scraping teams, a high-concurrency and clean proxy pool is essential infrastructure. For example, dedicated static residential proxy solutions from IPFoxy are sourced from real ISP networks and support precise geo-targeting at the country and city level. By integrating with scraping scripts, proxy rotation can be automated, helping bypass geo-restrictions and significantly improve success rates.

Example Python integration:

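```python
import urllib.request

if __name__ == '__main__':
    # Route both HTTP and HTTPS traffic through the proxy gateway.
    # Replace username and password with your own credentials.
    proxy = urllib.request.ProxyHandler({
        'https': 'username:password@gate-us-ipfoxy.io:58688',
        'http': 'username:password@gate-us-ipfoxy.io:58688',
    })
    opener = urllib.request.build_opener(proxy, urllib.request.HTTPHandler)
    urllib.request.install_opener(opener)

    # The response reports the public IP the request came from,
    # which confirms whether the proxy is in use.
    content = urllib.request.urlopen('http://www.ip-api.com/json').read()
    print(content)
```

2. Simulate real human behavior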

Anti-bot systems rely heavily on behavioral statistics. Bots tend to have low variance, while human behavior is naturally random.

- Random delays using Gaussian distribution:

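```python
import time

import numpy as np

def human_like_delay(min_sec=0.5, max_sec=3.0):
    # Centre the distribution on the midpoint of the range; with this
    # standard deviation about 95% of samples fall inside it.
    mean = (min_sec + max_sec) / 2
    std = (max_sec - min_sec) / 4
    delay = np.random.normal(mean, std)
    # Clamp outliers so the delay never leaves [min_sec, max_sec].
    time.sleep(max(min_sec, min(delay, max_sec)))
```

- Mouse movement simulation with Playwright: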

```python
import random
import time

from playwright.sync_api import sync_playwright

def human_mouse_move(page, start_x, start_y, target_x, target_y):
    # Playwright does not expose the current pointer position,
    # so the caller passes the starting coordinates explicitly.
    steps = random.randint(20, 40)
    for i in range(1, steps + 1):
        t = i / steps
        ease = 1 - (1 - t) ** 3  # cubic ease-out: fast start, slow finish
        # Small jitter keeps the path from being a perfect curve.
        current_x = start_x + (target_x - start_x) * ease + random.uniform(-2, 2)
        current_y = start_y + (target_y - start_y) * ease + random.uniform(-2, 2)
        page.mouse.move(current_x, current_y)
        time.sleep(random.uniform(0.005, 0.015))

with sync_playwright() as p:
    browser = p.chromium.launch(headless=False)
    page = browser.new_page()
    page.goto("https://example.com")
    page.mouse.move(0, 0)  # establish a known starting position
    human_mouse_move(page, 0, 0, 300, 400)
    page.click("selector")  # replace "selector" with a real CSS selector
    browser.close()
```

4. Build intelligent retry and rotation systems

A single IP cannot support large-scale scraping. You need a closed-loop system of detection, rotation, and retry.

- Automatic rotation: switch to a new IP when specific status codes are detected.

- Success rate monitoring: dynamically route traffic to the best-performing IP segments.
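The two points above can be combined in a small proxy pool that records outcomes per proxy, prefers proxies with a better track record, and hands back a replacement when a block status appears. This is a sketch under stated assumptions: the class name `ProxyPool`, the choice of 403 and 429 as block signals, and the smoothing formula are illustrative, not a prescribed design.

```python
import random

class ProxyPool:
    """Track per-proxy outcomes and prefer proxies with higher success rates."""

    BLOCK_STATUSES = {403, 429}  # assumed block signals; adjust per target site

    def __init__(self, proxies, rng=None):
        self.stats = {p: {"ok": 0, "fail": 0} for p in proxies}
        self._rng = rng or random.Random()

    def success_rate(self, proxy):
        s = self.stats[proxy]
        # Laplace smoothing: an unused proxy starts at 0.5 instead of dividing by zero.
        return (s["ok"] + 1) / (s["ok"] + s["fail"] + 2)

    def pick(self, exclude=None):
        """Choose a proxy at random, weighted by its smoothed success rate."""
        candidates = [p for p in self.stats if p != exclude] or list(self.stats)
        weights = [self.success_rate(p) for p in candidates]
        return self._rng.choices(candidates, weights=weights, k=1)[0]

    def report(self, proxy, status):
        """Record the outcome; return a replacement proxy if the status signals a block."""
        if 200 <= status < 300:
            self.stats[proxy]["ok"] += 1
            return proxy
        self.stats[proxy]["fail"] += 1
        if status in self.BLOCK_STATUSES:
            return self.pick(exclude=proxy)
        return proxy
```

A caller would use `pick()` to choose a proxy for a new task and pass every response status to `report()`, continuing with whichever proxy it returns. Weighted random selection keeps weaker proxies in occasional use, so their statistics can recover if a temporary ban expires.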

5. Deep fingerprint isolation

Modern systems like DataDome and Akamai analyze TLS handshake features, JA3 fingerprints, and HTTP/2 frame patterns.

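- Fingerprint isolation: give each concurrent session its own browser context in a tool like Playwright or Puppeteer, routed through its own SOCKS5 proxy, so that sessions do not share an IP address or browser state. Keep in mind that the proxy only relays traffic; the TLS fingerprint itself is produced by the client.

- Stealth transmission: SOCKS5 relays connections at a lower level than an HTTP proxy and does not parse or rewrite requests, so it adds no proxy headers of its own. This helps both stealth and performance for large-scale data collection.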
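As a rough illustration of per-session isolation, the sketch below pairs each session with its own SOCKS5 endpoint and browser profile, then opens one Playwright browser context per pairing. The helper names, proxy addresses, and profile values are placeholders invented for this example. Browser support for authenticated SOCKS5 proxies varies, so verify it for your setup, and note that separate contexts isolate cookies and storage but do not change the TLS fingerprint of the browser build.

```python
from itertools import cycle

def build_context_options(socks5_proxies, profiles, count):
    """Pair each session with its own proxy and browser profile.

    socks5_proxies: list of "host:port" strings (placeholders here).
    profiles: list of dicts with user_agent, viewport, and locale keys.
    """
    proxy_iter, profile_iter = cycle(socks5_proxies), cycle(profiles)
    options = []
    for _ in range(count):
        profile = next(profile_iter)
        options.append({
            "proxy": {"server": f"socks5://{next(proxy_iter)}"},
            "user_agent": profile["user_agent"],
            "viewport": profile["viewport"],
            "locale": profile["locale"],
        })
    return options

def open_isolated_contexts(browser, options):
    """Create one Playwright browser context per option set."""
    return [browser.new_context(**opts) for opts in options]

# Placeholder values for illustration only.
example_options = build_context_options(
    ["198.51.100.7:1080", "198.51.100.8:1080"],
    [
        {"user_agent": "ExampleUA/1.0 (profile A)",
         "viewport": {"width": 1366, "height": 768}, "locale": "en-US"},
        {"user_agent": "ExampleUA/1.0 (profile B)",
         "viewport": {"width": 1920, "height": 1080}, "locale": "en-GB"},
    ],
    count=3,
)
```

With a browser obtained from `p.chromium.launch()`, calling `open_isolated_contexts(browser, example_options)` would return three contexts, each of which can create its own pages.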

IV. FAQ

Q1: Why are rotating residential proxies better than static proxies for AI data collection?

A1: Rotating residential proxies allow IP rotation per request, making each request appear to come from a different real user. This avoids rate limits tied to a single IP. Static proxies are better suited for long-term sessions like account management.

Q2: How can I tell if my proxy is being detected?

A2: Common signals include frequent 403 or 429 errors and redirects to captcha pages, all of which indicate reduced IP trust or detection.

Q3: What are the benefits of SOCKS5 for AI data collection?

A3: SOCKS5 operates at a lower level than HTTP and relays traffic without parsing it, so it can carry any TCP-based protocol and does not inject proxy headers into requests. It does not encrypt traffic by itself; encryption still comes from TLS between the client and the target site. Because the proxy does not process the payload, it adds little overhead for large-scale data such as images and streaming content.

Summary

AI data collection in 2026 is no longer about simply running a crawler. Most failures come down to five key factors: IP quality, behavioral patterns, fingerprint management, concurrency control, and fault tolerance.

One principle stands out: stability matters more than speed. Only a stable data pipeline can continuously deliver high-quality training data for AI models.