In recent years, competition among large language models has increasingly shifted from algorithms to data. Models like GPT-5, Gemini 3, and Claude 4 all rely on massive, diverse, high-quality datasets, and the scale and quality of that data are among the strongest drivers of model performance.

At the same time, anti-scraping systems have evolved rapidly. Crawlers today face not occasional IP blocking but systematic, AI-powered detection. As platforms such as Reddit, Stack Overflow, and X keep upgrading their defenses, traditional scraping methods are becoming increasingly ineffective.

This guide explains how to build a scalable and stable data collection system using proxies.

I. Why Your LLM Data Collection Keeps Getting Blocked

1、IP behavior anomalies

Anti-scraping systems look at behavior patterns, not just the IP address itself. Common triggers include:

- High-frequency requests from a single IP

- Perfectly regular request intervals

- Continuous 24/7 activity

These patterns quickly lead to IP bans or rate limiting (HTTP 429 Too Many Requests). Switching to new IPs does not fix this: if the behavior stays the same, the new IPs are flagged too.
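When a site does answer with HTTP 429 (or 503), the usual remedy is to slow down rather than switch IPs. Below is a minimal sketch using only Python's standard library, assuming the target sends a standard `Retry-After` header when it has one: the code honors that header (either a number of seconds or an HTTP date) and otherwise falls back to exponential backoff with full jitter. `fetch_with_backoff`, `retry_after_seconds`, and `backoff_delay` are illustrative names, and the retry limits are placeholder values.

```python
import random
import time
import urllib.error
import urllib.request
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime


def retry_after_seconds(value, now=None):
    """Convert a Retry-After header value (seconds or HTTP-date) to seconds, or None."""
    if value is None:
        return None
    value = value.strip()
    if value.isdigit():
        return float(value)
    try:
        when = parsedate_to_datetime(value)
    except (TypeError, ValueError):
        return None  # unparseable header: let the caller fall back to backoff
    if when.tzinfo is None:
        when = when.replace(tzinfo=timezone.utc)  # HTTP dates are expressed in GMT
    now = now or datetime.now(timezone.utc)
    return max(0.0, (when - now).total_seconds())


def backoff_delay(attempt, base=1.0, cap=60.0):
    """Exponential backoff with full jitter: uniform in [0, min(cap, base * 2**attempt)]."""
    return random.uniform(0.0, min(cap, base * 2 ** attempt))


def fetch_with_backoff(url, max_attempts=5, timeout=30):
    """GET `url`, sleeping and retrying on 429/503; re-raises other errors or on the last attempt."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be >= 1")
    for attempt in range(max_attempts):
        try:
            with urllib.request.urlopen(url, timeout=timeout) as resp:
                return resp.read()
        except urllib.error.HTTPError as err:
            if err.code not in (429, 503) or attempt == max_attempts - 1:
                raise
            wait = retry_after_seconds(err.headers.get("Retry-After"))
            time.sleep(wait if wait is not None else backoff_delay(attempt))
```

Full jitter spreads retries from many workers over the whole backoff window, so they do not all return to the server at the same moment.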

2、Data center IPs are heavily monitored

Cloud providers publish their IP address ranges, so addresses from AWS, GCP, or Azure are easy to recognize and are widely labeled as low-trust. Platforms such as Amazon, eBay, Reddit, X, Medium, and Quora often block or challenge these IPs by default.

3、Browser fingerprint inconsistency

Modern systems analyze more than the IP address:

- Static or unrealistic User-Agent

- Missing cookies or session data

- No mouse movement or scrolling behavior

- Mismatched Canvas/WebGL/device fingerprints

Even with clean IPs, inconsistent fingerprints lead to detection.

4、AI-driven anti-scraping systems

Anti-bot systems now use AI to evaluate:

- Session behavior patterns

- Geographic consistency

- Interaction signals

- CAPTCHA and risk-scoring signals (e.g., hCaptcha challenges, reCAPTCHA v3 scores)

Without aligning IP, fingerprint, and behavior, blocking becomes inevitable.

II. Short-Term Workarounds for IP Blocking

1、Reduce request frequency

Lowering the request rate keeps you under rate limits in the short term, but it also caps throughput.
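A minimal sketch of per-host pacing, assuming a single process with worker threads: `PoliteRateLimiter` (a hypothetical helper, not a library class) reserves each request's time slot under a lock, so requests to the same host start at least `min_interval` seconds apart, plus a small random jitter so concurrent workers do not fire in lockstep. The clock and sleep functions are injectable so the pacing logic can be tested without waiting; the 2-second default is a placeholder, not a recommendation for any particular site.

```python
import random
import threading
import time


class PoliteRateLimiter:
    """Space out requests per host: at least `min_interval` seconds, plus up to `jitter` seconds."""

    def __init__(self, min_interval=2.0, jitter=1.0, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self.jitter = jitter
        self._clock = clock
        self._sleep = sleep
        self._next_slot = {}  # host -> earliest clock time at which the next request may start
        self._lock = threading.Lock()

    def wait(self, host):
        """Block until this caller's reserved slot for `host`; return how long it slept."""
        with self._lock:
            now = self._clock()
            start = max(now, self._next_slot.get(host, now))
            # Reserve the following slot before releasing the lock, so concurrent callers queue up.
            self._next_slot[host] = start + self.min_interval + random.uniform(0.0, self.jitter)
        delay = start - now
        if delay > 0:
            self._sleep(delay)
        return delay


# Usage: call limiter.wait(host) immediately before each request to that host.
limiter = PoliteRateLimiter(min_interval=2.0, jitter=1.0)
```

Sleeping outside the lock lets other hosts proceed while one host's queue waits.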

2、Rotate User-Agent

Switching browser identities can help diversify requests.

3、Simulate cookies and sessions

Maintaining session state improves realism, though it matters less for public pages that require no login.

4、Small proxy pools

Spreading requests across dozens or hundreds of IPs helps, but a pool that small cannot scale to large datasets.

These methods are suitable for testing or small-scale scraping, but not for LLM-level workloads.

III. How to Build a Scalable LLM Data Collection Architecture

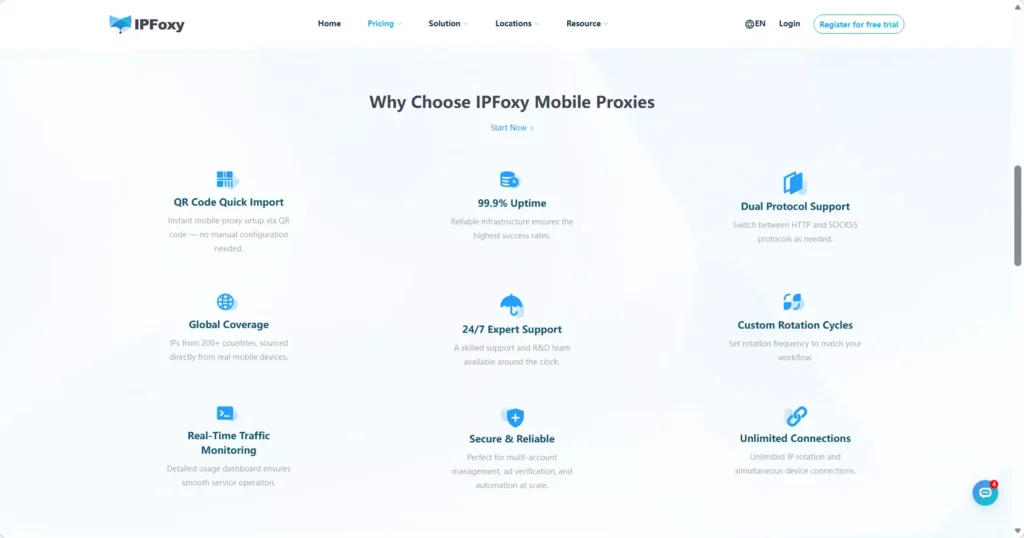

1、Proxy selection: residential, data center, and mobile

| Type | Speed | Trust level | Typical use case |
|---|---|---|---|
| Data center proxy | Very high | Very low | Open APIs, low-protection sites |
| Residential proxy | Medium | High | Large-scale LLM data collection |
| Mobile proxy | Medium | Very high | High-security targets |

Residential proxies route traffic through IP addresses that ISPs assign to real home users, which makes them significantly harder to distinguish from ordinary visitors. For large-scale data collection, residential proxies are the primary choice.
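As an illustration of the table above, a crawler can route each target to a proxy tier and build a `urllib` opener for it. This is a sketch: the gateway URLs, credentials, and host-to-tier mapping are hypothetical placeholders, and each commercial provider documents its own endpoint format. `urllib.request.ProxyHandler` and `urllib.request.build_opener` are standard-library APIs.

```python
import urllib.request

# Hypothetical gateway endpoints; replace with your provider's documented host, port, and credentials.
PROXY_GATEWAYS = {
    "datacenter": "http://USER:PASS@dc-gateway.example:8000",
    "residential": "http://USER:PASS@resi-gateway.example:8000",
    "mobile": "http://USER:PASS@mobile-gateway.example:8000",
}

# Hypothetical routing table: fast datacenter IPs for open APIs and low-protection sites,
# mobile IPs only for the most heavily protected targets, residential IPs for everything else.
TIER_BY_HOST = {
    "api.example.org": "datacenter",
    "shop.example.com": "mobile",
}


def proxy_tier(host, default="residential"):
    """Pick a proxy tier for a target host, defaulting to residential."""
    return TIER_BY_HOST.get(host, default)


def opener_for(host):
    """Build a urllib opener that sends both HTTP and HTTPS traffic through the host's tier."""
    proxy_url = PROXY_GATEWAYS[proxy_tier(host)]
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


# Usage: opener_for("api.example.org").open("https://api.example.org/v1/items", timeout=30)
```

Defaulting to residential and opting specific hosts into cheaper or more expensive tiers keeps cost proportional to how strongly each target is protected.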

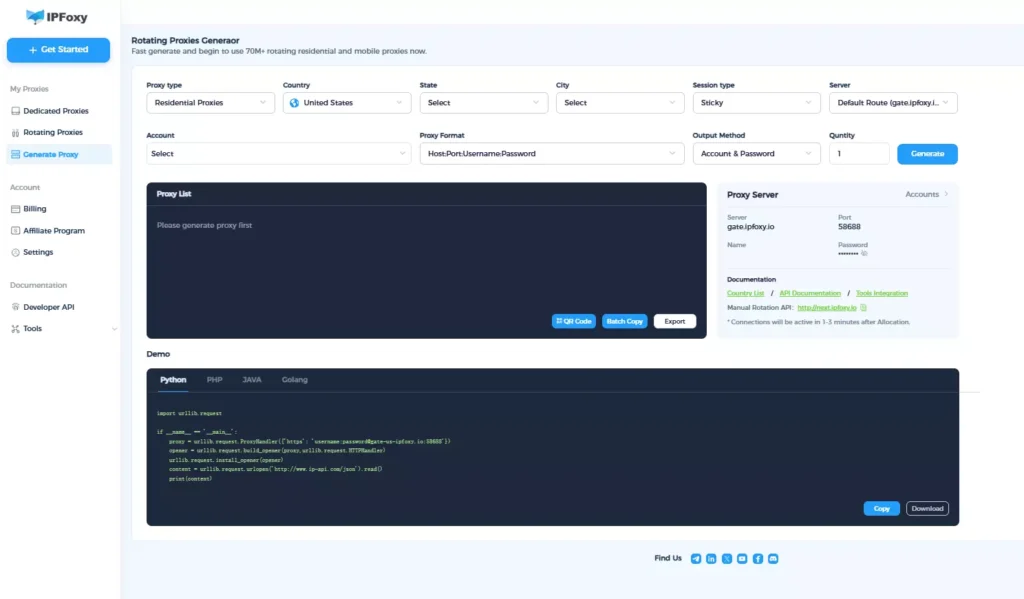

2、IP rotation and session strategy

- Intelligent rotation: Assign a new IP to each independent request to stay under per-IP rate limits

- Sticky sessions: Keep the same IP for 5–30 minutes during multi-step tasks such as login or pagination, where an IP change mid-session would look abnormal or break the session

This combination balances anonymity and session stability.
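The two strategies can share one proxy pool. A minimal, single-threaded sketch, assuming you manage a list of proxy URLs yourself (many providers instead rotate behind a single gateway, configured per their own documentation): `next_proxy()` rotates round-robin for independent requests, while `sticky_proxy(task_id)` pins a multi-step task to one proxy until a TTL expires. `ProxyRotator` and its methods are hypothetical names; the 15-minute TTL is simply a value inside the 5–30 minute range above.

```python
import itertools
import time
from dataclasses import dataclass


@dataclass
class StickyAssignment:
    proxy: str
    expires_at: float  # clock time after which the task gets a fresh proxy


class ProxyRotator:
    """Round-robin rotation for independent requests; TTL-bound sticky proxies for multi-step tasks."""

    def __init__(self, proxies, sticky_ttl=15 * 60, clock=time.monotonic):
        if not proxies:
            raise ValueError("proxy pool is empty")
        self._cycle = itertools.cycle(list(proxies))
        self._sticky = {}  # task id -> StickyAssignment
        self.sticky_ttl = sticky_ttl
        self._clock = clock

    def next_proxy(self):
        """Per-request rotation: each call returns the next proxy in the pool."""
        return next(self._cycle)

    def sticky_proxy(self, task_id):
        """Return the same proxy for `task_id` until its TTL expires, then assign a new one."""
        now = self._clock()
        assignment = self._sticky.get(task_id)
        if assignment is None or now >= assignment.expires_at:
            assignment = StickyAssignment(self.next_proxy(), now + self.sticky_ttl)
            self._sticky[task_id] = assignment
        return assignment.proxy

    def release(self, task_id):
        """Drop a finished task's pinning so it does not linger until expiry."""
        self._sticky.pop(task_id, None)
```

For example, fetch unrelated pages with `next_proxy()`, but pass the same `task_id` for every page of one paginated thread so the whole walk comes from a single IP.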

3、Browser fingerprint masking

- Bind each IP to a unique fingerprint

- Use browser automation tools like Playwright or Puppeteer

- Integrate anti-fingerprinting techniques (e.g., stealth scripts)

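- Keep headers consistent with the IP: Accept-Language and time zone should match the IP's location, and the User-Agent should match the device type (e.g., a mobile User-Agent on a mobile IP)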

A consistent identity across IP, fingerprint, and behavior is essential.

IV. FAQ

1、Are data center proxies good enough for LLM data collection?

It depends on the target. Data center proxies may work for open APIs, but high-value sources typically block them. Residential proxies provide much higher success rates.

2、Is more frequent IP rotation always better?

No. Excessive rotation can itself look abnormal. Use per-request rotation for independent requests and sticky sessions for continuous workflows.

3、How can I collect data responsibly?

Follow these key principles:

- Respect robots.txt

- Control request rates

- Use legitimate proxy sources

- Prefer official APIs when available
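The first two principles can be checked mechanically with Python's standard `urllib.robotparser`. A minimal sketch, assuming the robots.txt text has already been downloaded (in production, `RobotFileParser.set_url()` followed by `read()` does the fetching); the user-agent string and the 2-second fallback delay are hypothetical placeholders.

```python
from urllib import robotparser

USER_AGENT = "ExampleResearchBot/1.0"  # hypothetical; identify your crawler honestly


def load_rules(robots_txt):
    """Parse robots.txt text that has already been downloaded."""
    rp = robotparser.RobotFileParser()
    rp.parse(robots_txt.splitlines())
    return rp


def fetch_plan(rp, url, default_delay=2.0):
    """Return (allowed, delay_seconds): skip disallowed URLs, honor Crawl-delay when present."""
    if not rp.can_fetch(USER_AGENT, url):
        return False, None
    delay = rp.crawl_delay(USER_AGENT)
    return True, (float(delay) if delay is not None else default_delay)


sample = "User-agent: *\nDisallow: /private/\nCrawl-delay: 5\n"
rules = load_rules(sample)
print(fetch_plan(rules, "https://example.com/private/page"))  # (False, None)
print(fetch_plan(rules, "https://example.com/docs/page"))  # (True, 5.0)
```

The returned delay can feed directly into whatever per-host pacing the crawler already uses.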

V. Summary

In 2026, LLM data collection requires more than simple scripts and proxies. AI-driven anti-scraping systems analyze IP behavior, infrastructure type, and browser identity simultaneously. Without a robust architecture, large-scale scraping becomes unsustainable.

A reliable setup that combines residential proxies, intelligent rotation, and fingerprint consistency is essential for building scalable, stable data pipelines.