With the explosive growth of AI applications, traditional web crawlers can no longer fully meet the data demands of LLM training, RAG knowledge base construction, and AI Agent automation. This article reviews the most noteworthy LLM web scraping tools in 2026, helping developers and businesses choose the right AI-powered scraping solution.

I. What Is an LLM-Powered Web Scraper?

LLM-powered web scrapers are a new generation of web data extraction tools driven by Large Language Models (LLMs). By 2026, traditional Python tools such as Scrapy and BeautifulSoup face four major limitations, which is accelerating the rise of modern AI scraping solutions:

- JavaScript-heavy websites are now standard, and crawlers that do not execute JavaScript often capture only empty HTML shells.

- Cloudflare and WAF anti-bot systems have become much more advanced, with IP bans and CAPTCHA challenges now common.

- Website structures change frequently, making XPath and CSS selector rules fragile and expensive to maintain.

- RAG systems and AI Agents need more than raw HTML: they require cleaned, structured content that is ready for chunking and embedding.

Unlike traditional crawlers that rely on manually written extraction rules, LLM web scraping tools interpret page content semantically and directly output structured data suitable for AI workflows. As a result, a layout change that would break a hand-written selector often requires no rule update at all.

The table below highlights the differences between traditional crawlers and LLM-powered scraping tools.

| Comparison Area | Traditional Crawlers | LLM Web Scraping Tools |
| --- | --- | --- |
| Content Parsing | XPath / CSS Selectors | LLM Semantic Understanding + Automatic Extraction |
| Dynamic Page Support | Limited (requires extra setup) | Built-in browser rendering |
| Structured Output | Manual rule writing | Automatic JSON / Markdown output |
| Maintenance Cost | High (rules break easily) | Lower (model adapts automatically) |
| Best Use Cases | Stable structured sites | RAG, AI Agents, unstructured content extraction |
| Anti-Bot Capability | Weak | Stronger with proxies and fingerprinting |
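The maintenance-cost difference is easiest to see with a concrete case. The following is a minimal sketch using only Python's standard library; the `ClassTextExtractor` helper and both HTML snippets are hypothetical and written purely for illustration. It shows a class-based extraction rule that works on one version of a page and silently returns nothing after a front-end redesign renames the class.

```python
from html.parser import HTMLParser

# Tags that never have a closing tag, so they must not affect nesting depth.
VOID_TAGS = {"br", "img", "input", "hr", "meta", "link"}


class ClassTextExtractor(HTMLParser):
    """Collects text found inside any element carrying a given CSS class."""

    def __init__(self, target_class: str) -> None:
        super().__init__()
        self.target_class = target_class
        self._depth = 0  # greater than 0 while inside a matching element
        self.matches: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        classes = (dict(attrs).get("class") or "").split()
        if self._depth or self.target_class in classes:
            self._depth += 1

    def handle_endtag(self, tag):
        if self._depth and tag not in VOID_TAGS:
            self._depth -= 1

    def handle_data(self, data):
        if self._depth and data.strip():
            self.matches.append(data.strip())


def extract_by_class(html: str, target_class: str) -> list[str]:
    """Apply a single hand-written rule: 'text inside elements with this class'."""
    parser = ClassTextExtractor(target_class)
    parser.feed(html)
    return parser.matches


# Hypothetical product page before and after a redesign.
OLD_PAGE = '<div><span class="price">$19.99</span></div>'
NEW_PAGE = '<div><span class="product-cost">$19.99</span></div>'

print(extract_by_class(OLD_PAGE, "price"))  # ['$19.99']
print(extract_by_class(NEW_PAGE, "price"))  # []
```

The second call does not raise an error; it simply returns an empty list, which is why selector breakage tends to go unnoticed until downstream data is missing. An LLM-based extractor would instead be asked for "the product price" and would not depend on the class name.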

II. Best LLM Web Scraping Tools in 2026

1. Firecrawl

Designed specifically for LLM and RAG workflows, Firecrawl can convert almost any webpage into Markdown or structured JSON with a single API call. It includes JavaScript rendering, automatic noise removal, and supports both full-site crawling and single-page scraping.

- Best for: RAG knowledge bases, AI Agent data collection, rapid prototyping

- Pros: Extremely easy integration, high-quality output, highly LLM-friendly, hosted infrastructure included

- Cons: Limited free tier, expensive at scale, less customizable than self-hosted tools

- Suitable for Agent/RAG: Highly recommended
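To make the "single API call" concrete, here is a hedged sketch that builds, but does not send, a scrape request using only Python's standard library. The endpoint path, the `formats` field, and the Bearer-token header are assumptions based on Firecrawl's public REST documentation at the time of writing and may differ in current versions; `build_scrape_request` is a hypothetical helper name.

```python
import json
import urllib.request

# Assumed endpoint; verify against Firecrawl's current API reference.
FIRECRAWL_SCRAPE_URL = "https://api.firecrawl.dev/v1/scrape"


def build_scrape_request(page_url: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a single-page scrape request asking for Markdown."""
    payload = {"url": page_url, "formats": ["markdown"]}  # assumed field names
    return urllib.request.Request(
        FIRECRAWL_SCRAPE_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_scrape_request("https://example.com", api_key="YOUR_API_KEY")
print(request.get_method(), request.full_url)
# To send it: urllib.request.urlopen(request, timeout=30), then JSON-decode the body.
```

Keeping request construction separate from sending makes the call easy to inspect and test without spending API credits.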

2. Crawl4AI

An open-source AI scraping framework deeply integrated with LLM extraction capabilities. It supports both precise CSS/XPath extraction and semantic AI extraction modes, while offering strong asynchronous performance and local deployment support.

- Best for: Technical developers, private deployments, cost-sensitive projects

- Pros: Fully open source, highly customizable, excellent async performance, Docker support

- Cons: Requires self-managed infrastructure and maintenance

- Suitable for Agent/RAG: Best choice for technical teams

3. Apify

Apify provides thousands of prebuilt Actors (scraping templates) and has recently integrated more AI functionality, including natural language scraping instructions. It also includes scheduling, monitoring, and storage systems.

- Best for: Enterprise-scale data collection, ready-made scraping templates such as LinkedIn, Amazon, and Google Maps

- Pros: Mature ecosystem, large template library, strong scheduling and management features, integrates with AI frameworks like LangChain

- Cons: Higher pricing, less AI-native than Firecrawl

- Suitable for Agent/RAG: Yes, though additional configuration may be needed

4. Browse AI

A no-code AI scraping platform designed for non-technical users. Users can record scraping workflows visually and create monitoring tasks without coding.

- Best for: Operations teams, market analysts, business users without programming experience

- Pros: Easy onboarding, intuitive interface, supports scheduled monitoring and change detection

- Cons: Limited flexibility, weaker handling of complex pages, not ideal for large-scale AI pipelines

- Suitable for Agent/RAG: Suitable only for lightweight workflows

5. ScrapeGraphAI

An open-source AI scraping framework built around graph pipelines and LLM-driven extraction. Users simply describe the data they want in natural language instead of writing extraction rules.

- Best for: Research projects, experimental extraction workflows, prompt-based scraping

- Pros: Natural language driven, integrates with OpenAI and Ollama models

- Cons: Still evolving in terms of stability and performance, use cautiously in production

- Suitable for Agent/RAG: Strong conceptual fit for exploratory projects

6. ZenRows

A scraping API platform focused on anti-bot bypassing. It combines browser rendering, CAPTCHA solving, and other anti-detection capabilities into a single API. Recently, it has also added AI-powered extraction features.

- Best for: Websites with strict anti-bot protection, commercial-scale scraping requiring high success rates

- Pros: Strong anti-bot capability, high scraping success rate, no need to build proxy infrastructure

- Cons: Higher pricing, AI extraction features are secondary rather than core functionality

- Suitable for Agent/RAG: Best used as the data acquisition layer combined with other LLM processing tools

III. How to Choose the Right LLM Web Scraping Solution

Choosing an AI web scraping tool means comparing more than feature lists: the tool also has to work with the rendering, proxy, and anti-detection infrastructure underneath it. In 2026, the web environment is more complex than ever, and dynamic rendering, anti-bot systems, and IP blocking can each interrupt a scraping workflow. Before selecting a tool, it is important to understand the foundational infrastructure modern AI scrapers rely on.

1. Why Infrastructure Matters for AI Scraping in 2026

- Browser automation is now essential: Modern websites rely heavily on JavaScript rendering. Without a real browser environment, meaningful content often cannot be retrieved. Tools like Playwright and Puppeteer have become core infrastructure for modern LLM web scrapers, simulating real user behavior such as scrolling, clicking, and page interaction.

- Dynamic rendering is critical: In SPA (Single Page Application) architectures, core content is often injected by JavaScript only after the initial HTML has loaded. AI scrapers must wait for that rendering to complete (for example, until a target element appears) before extracting valid data, making rendering support mandatory rather than optional.

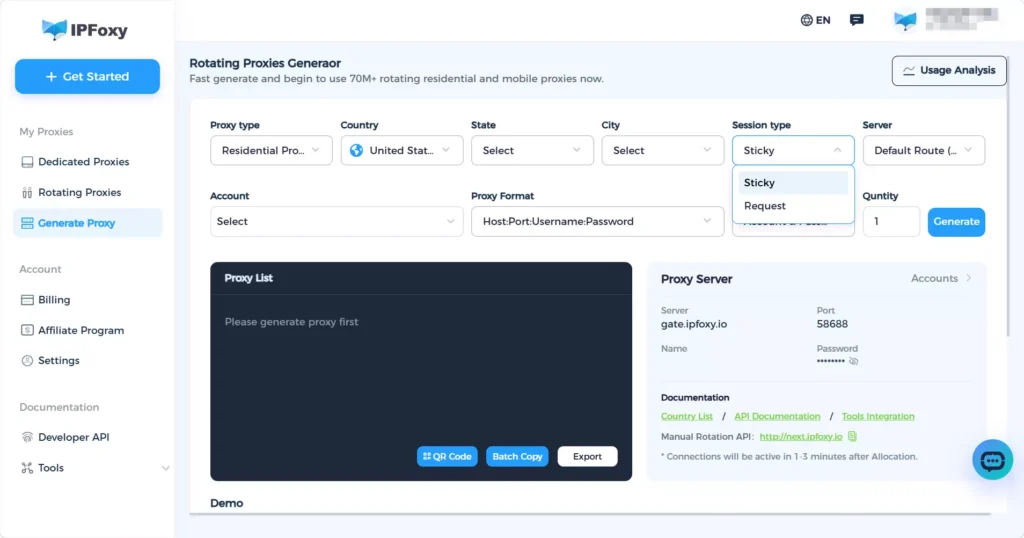

- Why AI scrapers increasingly depend on proxies: IP addresses remain one of the primary signals anti-bot systems use for detection. Large-scale, long-running scraping jobs send large numbers of requests, and rotating residential proxies spread those requests across many addresses, which reduces rate limiting and IP bans and improves stability and success rates. For high-volume scraping workflows, IPFoxy Proxies provides rotating residential proxy services with flexible IP rotation and geo-targeting support.

- Anti-detect browser environments and anti-bot bypassing: Browser fingerprints are another major detection signal used by anti-bot systems. Modern AI scraping tools increasingly include fingerprint randomization and TLS handshake simulation. Combined with proxies, they create browsing environments that closely resemble real users, helping bypass mainstream anti-bot protections.
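The proxy rotation described above can be sketched with Python's standard library alone. This is an illustrative outline rather than a production setup: the proxy URLs in `PROXY_POOL` are placeholders, and `proxy_cycle`, `opener_for`, and `fetch` are hypothetical helper names. Real deployments typically receive a rotating gateway address or a proxy list from their provider.

```python
import itertools
import urllib.request
from typing import Iterator

# Placeholder proxy endpoints; a real pool would come from your provider.
PROXY_POOL = [
    "http://user:pass@proxy-1.example.com:8000",
    "http://user:pass@proxy-2.example.com:8000",
    "http://user:pass@proxy-3.example.com:8000",
]


def proxy_cycle(pool: list[str]) -> Iterator[str]:
    """Return an endless round-robin iterator over the proxy pool."""
    if not pool:
        raise ValueError("proxy pool is empty")
    return itertools.cycle(pool)


def opener_for(proxy_url: str) -> urllib.request.OpenerDirector:
    """Build an opener that routes HTTP and HTTPS traffic through one proxy."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    opener = urllib.request.build_opener(handler)
    opener.addheaders = [("User-Agent", "example-crawler/0.1")]
    return opener


def fetch(url: str, proxies: Iterator[str], timeout: float = 15.0) -> bytes:
    """Fetch a URL through the next proxy in the rotation."""
    opener = opener_for(next(proxies))
    with opener.open(url, timeout=timeout) as response:
        return response.read()


rotation = proxy_cycle(PROXY_POOL)
print([next(rotation) for _ in range(4)])  # the fourth entry wraps to the first proxy
```

Round-robin is the simplest policy. A fuller version would also drop proxies that fail repeatedly and pin a session to one proxy when a site ties cookies to an IP address.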

Once the infrastructure layer is stable, the next step is selecting the most suitable AI scraping solution based on your use case.

2. Choosing an AI Scraping Solution by Use Case

- Building RAG knowledge bases: Firecrawl and Crawl4AI are the top choices. Firecrawl offers simplicity and high-quality output, while Crawl4AI provides open-source flexibility and private deployment options. Both output LLM-friendly Markdown and integrate smoothly with frameworks like LangChain and LlamaIndex.

- Large-scale data collection: Use Apify (a hosted enterprise solution) or Crawl4AI combined with rotating residential proxies. At scale, proxy infrastructure and anti-bot strategy matter more than the scraper itself, so proxy planning should be part of the initial architecture.

- Non-technical users: Browse AI is the easiest solution for beginners, suitable for monitoring competitor pricing, collecting job listings, and other recurring business tasks.

- AI Agent automation: Crawl4AI and ScrapeGraphAI provide the best support for Agent-based workflows, including async execution and tool-style integrations compatible with frameworks like AutoGen and CrewAI.
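For the RAG use case, one reason Markdown output is convenient is that headings give natural chunk boundaries before embedding. The following is a minimal sketch of heading-based splitting using only the standard library; `split_markdown_by_heading` is a hypothetical helper, not part of Firecrawl, Crawl4AI, LangChain, or LlamaIndex, and it ignores edge cases such as `#` lines inside fenced code blocks.

```python
import re

HEADING = re.compile(r"^(#{1,6})\s+(.*)$")


def split_markdown_by_heading(markdown: str) -> list[dict[str, str]]:
    """Split Markdown into one chunk per heading, keeping the heading as metadata."""
    chunks: list[dict[str, str]] = []
    title = ""
    lines: list[str] = []

    def flush() -> None:
        # Only emit a chunk when the section contains non-blank text.
        if any(line.strip() for line in lines):
            chunks.append({"title": title, "text": "\n".join(lines).strip()})

    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            flush()
            title, lines = match.group(2).strip(), []
        else:
            lines.append(line)
    flush()
    return chunks


scraped = "# Intro\nHello\n\n## Setup\nStep 1\nStep 2\n"
for chunk in split_markdown_by_heading(scraped):
    print(chunk)
```

Each chunk keeps its section title, which can be stored alongside the embedding so retrieved passages carry some context about where they came from.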

IV. FAQ

1. Can LLM web scraping tools bypass every anti-bot mechanism?

No tool can bypass every anti-bot mechanism perfectly. However, combining LLM scraping tools with high-quality residential proxies and reasonable request rate control can solve most common scraping challenges.
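As one illustration of "reasonable request rate control", the sketch below retries a failing call with exponential backoff and random jitter. The helper names `backoff_delays` and `call_with_backoff` and the default values are assumptions chosen for the example; a real crawler would retry only on transient errors such as HTTP 429 or 503 rather than on every exception.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def backoff_delays(retries: int, base: float = 1.0, cap: float = 30.0) -> list[float]:
    """Exponential delays (base, 2*base, 4*base, ...) capped at `cap` seconds."""
    return [min(cap, base * 2 ** attempt) for attempt in range(retries)]


def call_with_backoff(
    action: Callable[[], T],
    retries: int = 4,
    base: float = 1.0,
    cap: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `action`, retrying up to `retries` times with jittered backoff."""
    for delay in backoff_delays(retries, base, cap):
        try:
            return action()
        except Exception:  # sketch only: narrow this to transient errors in practice
            # Random jitter keeps many workers from retrying at the same moment.
            sleep(random.uniform(0, delay))
    return action()  # final attempt; any exception now propagates to the caller


print(backoff_delays(4))  # [1.0, 2.0, 4.0, 8.0]
```

The `sleep` parameter is injectable so the retry logic can be exercised in tests without actually waiting.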

2. Are proxies always required for LLM web scraping?

Not always, but proxies become almost essential for large-scale or long-running scraping tasks.

3. Why do AI Agents need web scraping capabilities?

Because Agents need real-time access to external information. Tasks such as web search, page analysis, and content extraction all rely on AI scraping capabilities.

V. Conclusion

By 2026, LLM web scraping tools have largely evolved from experimental technology into production-ready infrastructure, although maturity still varies from tool to tool.

As AI applications continue to expand, data acquisition capabilities are becoming a core competitive advantage for AI systems. Choosing the right AI scraping solution is one of the most important first steps in building high-quality AI applications.